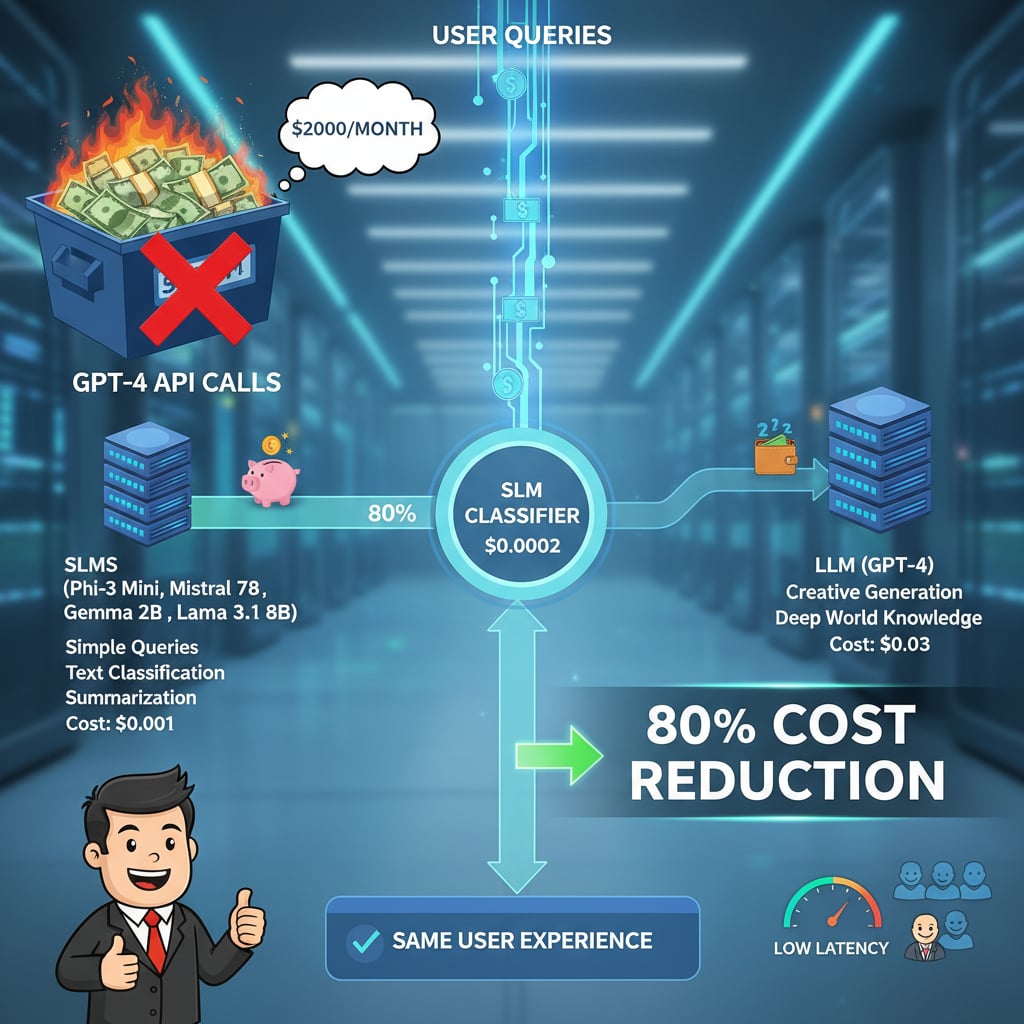

Most teams overspend 10x on LLM APIs by sending every request to GPT-4. Here's the exact routing framework that uses small language models for 80% of traffic and reserves LLMs for the 20% that actually needs them - same user experience, 80%+ cost reduction.

You're Probably Overpaying for AI by 10x

Here is a pattern we see in almost every company that comes to us for AI consulting: they are sending every single API call to GPT-4, Claude Opus, or another frontier model. Customer support queries, text classification, entity extraction, simple lookups - all routed to a $0.03-per-call model when a $0.0002-per-call model would produce identical results.

The numbers are staggering. We have seen teams spending $2,000 per month on LLM API costs when $200 would have given them the same output quality for their use case. That is not an optimization opportunity - that is a structural mistake baked into the architecture from day one.

This article lays out the exact framework we use at TunerLabs to help companies cut their LLM costs by 80% or more - without any degradation in user experience.

The Core Insight: Not Every Task Needs a 70B+ Parameter Model

The AI industry has conditioned teams to believe that bigger is always better. It is not. The right question is never "what is the most powerful model?" - it is "what is the smallest model that solves this task reliably?"

Small Language Models (SLMs) - models in the 2B to 8B parameter range - have become remarkably capable in the last 12 months. For well-defined, narrow tasks, they match or exceed the performance of models 10x their size.

When to Use SLMs (Small Language Models)

SLMs excel at structured, well-scoped tasks where the input and output patterns are predictable:

- Text classification and sentiment analysis - Is this customer review positive, negative, or neutral? Is this support ticket urgent or routine?

- Entity extraction and tagging - Pull names, dates, product codes, and addresses from unstructured text

- Simple summarization - Condense a 500-word email into 3 bullet points

- Routine Q&A from structured data - Answer questions from a knowledge base, FAQ, or database

- Input validation and formatting - Clean, normalize, and validate user inputs before processing

- Language detection and translation routing - Identify the language and route to the correct pipeline

- Content moderation and filtering - Flag inappropriate content before it reaches a human or a downstream model

When You Actually Need LLMs

Reserve your frontier model budget for tasks that genuinely require deep reasoning, broad world knowledge, or creative generation:

- Complex multi-step reasoning - Tasks that require chaining multiple logical steps, weighing trade-offs, or planning

- Nuanced creative generation - Writing that requires voice, tone, cultural context, or stylistic sophistication

- Ambiguous queries with zero-shot context - Requests where the user intent is unclear and the model needs to infer what is being asked

- Tasks requiring deep world knowledge - Questions that need broad factual knowledge not present in your data

- Code generation and debugging - Especially for complex, multi-file, architecturally aware code changes

- Long-document analysis - Synthesizing insights across 50+ pages of content

The Framework: Route, Classify, Escalate

The real game-changer is not choosing between SLMs and LLMs. It is chaining them together in an intelligent routing pipeline.

Here is the architecture:

Step 1: User query comes in

Step 2: SLM classifies intent (cost: ~$0.0002 per request)

The SLM reads the incoming query and classifies it into a complexity tier. This is a simple classification task - exactly what SLMs are built for.

Step 3: Route based on classification

- Simple query? SLM responds directly (cost: ~$0.001 per request)

- Complex query? Escalate to LLM (cost: ~$0.03 per request)

The math:

If 80% of your traffic is simple (and it almost always is), your blended cost per request drops from $0.03 to roughly $0.006. That is an 80% cost reduction with the same user experience.

| Traffic Type | Volume | Cost Without Routing | Cost With Routing |

|---|---|---|---|

| Simple queries | 80% | $0.03 each | $0.001 each |

| Complex queries | 20% | $0.03 each | $0.03 each |

| Classifier overhead | 100% | $0.00 | $0.0002 each |

| Blended cost | 100% | $0.03 | $0.007 |

That is a 76% reduction even with conservative estimates. In practice, we regularly see 80-90% savings because the simple query ratio is often higher than 80%.

The Models to Use Right Now

Here are the SLMs we recommend for production routing in 2026:

Phi-3 Mini (3.8B parameters)

Microsoft's Phi-3 Mini is the most impressive model relative to its size. It punches well above its weight on reasoning benchmarks and runs comfortably on a single GPU or even on-device. Ideal for classification, extraction, and simple Q&A tasks.

Best for: Intent classification, text extraction, input validation

Mistral 7B

The best open-source bang for your buck. Mistral 7B offers strong general-purpose performance that covers a wide range of SLM use cases. The instruction-tuned variant is particularly good for structured tasks.

Best for: Summarization, routine Q&A, content moderation, general-purpose SLM tasks

Gemma 2B

Google's Gemma 2B is lightweight and surprisingly capable. At just 2 billion parameters, it is the most cost-efficient option for high-volume, simple tasks. If your classification task has a well-defined label set, Gemma 2B will handle it at near-zero cost.

Best for: High-volume classification, sentiment analysis, language detection

Llama 3.1 8B

Meta's Llama 3.1 8B is the ceiling of what you can get from an SLM. It handles tasks that sit in the grey zone between simple and complex - structured reasoning, multi-step extraction, and moderate summarization. Use it as your "mid-tier" model in a 3-tier routing architecture.

Best for: Mid-complexity tasks, structured reasoning, the "grey zone" between SLM and LLM

Building the Routing Layer: Architecture Patterns

Pattern 1: Binary Router (Simple vs. Complex)

The simplest implementation. A classifier model reads the incoming query and outputs a binary decision: route to SLM or route to LLM.

- Classifier: Fine-tuned Gemma 2B or Phi-3 Mini

- SLM responder: Mistral 7B or Llama 3.1 8B

- LLM responder: GPT-4, Claude Opus, or Gemini Pro

This handles the majority of use cases and can be implemented in a single afternoon.

Pattern 2: Multi-Tier Router

For more granular control, use a 3-tier architecture:

- Tier 1 (Micro): Gemma 2B - handles the simplest 50% of traffic (lookups, classification, validation)

- Tier 2 (Mid): Llama 3.1 8B - handles the next 30% (summarization, extraction, structured Q&A)

- Tier 3 (Full): Frontier LLM - handles the remaining 20% (reasoning, creative, ambiguous)

This maximizes cost savings but adds routing complexity.

Pattern 3: Confidence-Based Escalation

Instead of classifying the query upfront, let the SLM attempt a response and measure its confidence. If confidence is below a threshold, escalate to the LLM.

- SLM generates a response with a confidence score

- If confidence is above 0.85, serve the SLM response

- If confidence is below 0.85, pass the query (with SLM context) to the LLM

This pattern catches edge cases that a binary classifier might miss, but adds latency for escalated queries.

Real-World Cost Impact

Here is what the cost optimization looks like for a company processing 100,000 LLM API calls per month:

| Metric | Before Routing | After Routing |

|---|---|---|

| Monthly API calls | 100,000 | 100,000 |

| All routed to | GPT-4 ($0.03/call) | SLM + LLM mix |

| Monthly cost | $3,000 | $400-700 |

| Annual cost | $36,000 | $4,800-8,400 |

| Annual savings | - | $27,600-31,200 |

For companies processing 1 million calls per month, the savings scale to $250,000-300,000 per year. At that scale, the routing infrastructure pays for itself in the first week.

Common Mistakes to Avoid

Mistake 1: Fine-Tuning When Prompting Works

Do not fine-tune an SLM for a classification task until you have tried few-shot prompting first. Many teams spend weeks fine-tuning when 5 well-chosen examples in the prompt would have achieved 95%+ accuracy.

Mistake 2: Routing on Input Length Instead of Complexity

Long inputs are not inherently complex. A 2,000-word customer email might just need a simple sentiment classification. Route based on task complexity, not input size.

Mistake 3: Not Monitoring the SLM Failure Rate

Set up monitoring to track how often the SLM produces incorrect or low-quality responses. If the SLM failure rate exceeds 5%, either retrain the classifier or adjust the routing threshold.

Mistake 4: Ignoring Latency

SLMs are faster than LLMs - often 5-10x faster. This means your routed architecture does not just save money, it also improves response times for the 80% of queries handled by SLMs. Make sure you are measuring and reporting this latency improvement.

Mistake 5: Defaulting to the Newest Model

Every time a new frontier model launches, teams rush to upgrade. Unless the new model demonstrably improves your specific task, stay on your current SLM. Stability and cost predictability matter more than chasing benchmarks.

The Bottom Line

The companies winning at AI in 2026 are not the ones using the biggest model. They are the ones using the right model for the right task.

Intelligent routing is not an advanced optimization - it is table stakes for any production AI system. If you are sending every API call to a frontier model, you are leaving 80% of your budget on the table.

The Quick-Start Checklist

- Audit your current API traffic: What percentage of calls are simple, structured tasks?

- Pick an SLM for your classifier (Phi-3 Mini or Gemma 2B)

- Pick an SLM for your responder (Mistral 7B or Llama 3.1 8B)

- Build a binary router: simple → SLM, complex → LLM

- Monitor SLM accuracy and adjust the routing threshold

- Measure cost savings weekly and report to leadership

Your CFO will thank you. Your latency will thank you. Your users will not notice the difference.

Need help building an intelligent model routing architecture? Talk to TunerLabs - we design and implement cost-optimized AI systems for production. From SLM fine-tuning to multi-tier routing pipelines, we engineer the infrastructure that makes AI economically viable at scale.

Topics:

Master Claude Code

The complete architecture guide — Skills, Agents, Memory & the full Tools reference. Everything in one beautiful page.